These FAQ were developed following the July 21, 2021 #FeedbackASAP meeting. If you have any additional questions or comments, please contact jessica.polka@asapbio.org.

Last update: 2022-01-28

- General

- For readers & reviewers

- For authors

- For journals, editors, and publishers

- For funders and institutions

What is public preprint feedback?

Any public reaction to a preprint, ranging from tweets to comments to formal peer review.

Where does it happen?

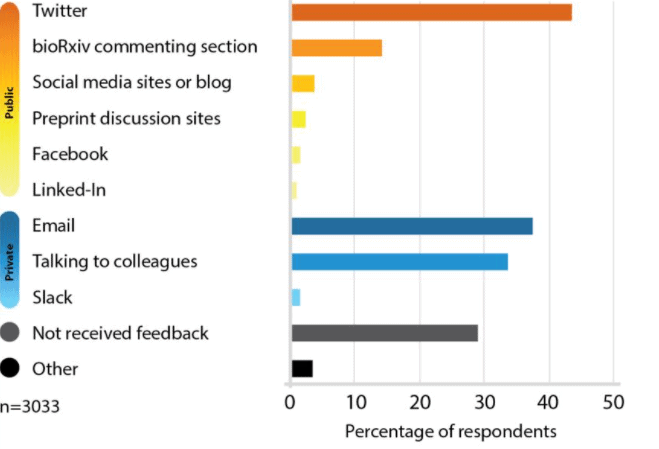

Many preprint servers offer commenting and annotation features. Sever et al.’s 2019 survey (see image) found that public feedback on bioRxiv preprints is primarily received on Twitter and in the comment section. An analysis of bioRxiv comments by Malicki et al. found that 12% of non-author comments resembled journal reviews.

Preprint discussion platforms organize commenting activity into a variety of workflows and enhance the discoverability of feedback. Learn more about preprint feedback initiatives at ReimagineReview.

How can I find it?

Many preprint servers have commenting functionality, and some link to social media commentary or external peer review sites. For example, bioRxiv has created an evaluation dashboard to organize feedback from multiple sources, including social media.

Twitter is a common venue for preprint discussions. If a server doesn’t display tweets discussing the paper, you can enter the preprint’s URL as a search term at twitter.com.

Preprint reviews from organized communities can be easily found at Sciety and Early Evidence Base, both of which provide tools to search and filter preprint evaluations. Some reviews are also linked from individual preprint records at EuropePMC.

Wherever you find preprint reviews, be sure to check for an author response to make sure you are considering their perspectives. In venues where anyone can contribute to a stream of feedback (for example, preprint server commenting sections, Hypothesis, or Twitter), look for a reply from the authors. If the review appears in venues that don’t directly support author responses (such as GitHub), you can search for the URL of the review to identify pages that mention it.

What are the benefits of making preprint feedback public?

Any constructive feedback on a preprint, including feedback sent privately to the authors, is beneficial: it helps authors improve their paper at a relatively early stage of its development, and it can include feedback from anyone, rather than the handful of reviewers that might be consulted by a journal.

Bringing that feedback into the open can have several benefits for a variety of stakeholders.

For authors, public preprint feedback can:

- Increase the rigor of scientific work because criticisms and concerns can be validated by others.

- Enable them to publicly rebut any lingering or recurrent criticisms.

- Reduce rounds of re-review.

For readers, public preprint feedback can:

- Provide context and expert evaluation of the work. For example, rapid and public evaluation of COVID-19 preprints helped non-specialists understand new developments.

For feedback providers and reviewers, public preprint feedback can:

- Include more researchers in the peer review process than those invited by journals, including those from underrepresented geographical areas and career stages.

- Enable peer review to be reused by other journals or evaluators, reducing time wasted on rounds of review.

- Act as a sample of your reviewing work, enabling journals to more confidently extend invitations to join editorial boards and reviewer pools.

For journals and publishers, public preprint feedback can:

- Identify potential reviewers and editorial board members from preprint feedback they’ve provided.

- Inform the decisions of editors at the triage stage.

- Complement formal peer review reports commissioned by the journal.

What are the concerns?

One major concern surrounding public preprint feedback is that it may harm the reputations of authors who receive it. The overwhelming majority of papers are criticized, often heavily, in the process of journal peer review, but this feedback is seldom seen (unless the journal participates in the constructive practice of publishing peer review). Open review may give uncritical readers the impression that a paper with public feedback is less robust than papers without it, even if they are actually of similar quality. However, as the volume of public review increases through efforts like eLife’s policy of posting reviews on preprints, preprints with public reviews are likely to become normalized.

Conversely, another concern is that public review may cause referees to adopt a more collegial approach to their feedback. While this likely has positive consequences, it may also cause some reviewers to “pull punches” and avoid or soften criticism, especially if reviewers are compelled to sign their reviews. At the same time, some of the most well-circulated examples of preprint feedback (for example, the deluge of comments on an infamous, and later withdrawn, preprint) include readers pointing out serious flaws in the work. This suggests that some readers are willing to call out serious problems, at least when the stakes for public health and understanding of science are high.

Open participation in peer review and feedback, like other forms of review, is vulnerable to bias and gaming. Similar to the manipulations that occur in peer review rings that function within journals, reviewers could enlist colleagues to leave favorable comments without properly disclosing competing interests. However, public availability of feedback would make it relatively easy to identify a pattern of biased reviews as compared to those submitted behind closed doors to a variety of journals. Furthermore, scientists want and need critical but fair feedback. Merely rubber stamping papers will harm the reputation of the peer reviewer or service.

Even without malicious intent, readers are more likely to see, read, and ultimately highlight and comment on preprints within their network. Institutional, geographic, or other disparities in preprint review can amplify Matthew effects.

These issues are discussed further in other FAQ listed below.

How can preprint review contribute to equity?

Preprints have great potential to democratize access to and production of knowledge. However, due to availability bias, researchers tend to be most aware of work occurring within their own network; highlighting and sharing only these preprints can contribute to Matthew effects and limit readers’ exposure to only a sliver of available science. Looking outside of the obvious candidates when highlighting or amplifying preprints can help. You can:

- Search regional servers. Because some servers may overrepresent certain countries (Abdill et al., 2020), check regional preprint servers such as AfricArxiv and RINarxiv to find papers that may be less visible to your colleagues outside those regions, or include the names of specific countries in your search to find work originating from those settings.

- Publish and search preprints in various languages. An increasing number of preprint repositories accept submissions in more than one language. Make strategic searches by keywords in English and their translations to your mother tongue. Check if a preprint already has one or more translations (of the abstract) available. For more on the importance of multilingualism, see the Helsinki initiative.

- Find preprints that have yet to be blogged or tweeted about. For example, when looking through bioRxiv for her #365preprints project, Prachee Avasthi recommends selecting preprints that have yet to be tweeted. For the same reason, avoid using Twitter as your only source for finding preprints.

Professional review coordinators and preprint feedback services can also play a role by adopting policies and practices that encourage equity and inclusion.

Thanks to Dasapta Erwin Irawan, Jo Havemann, and Stefano Vianello for their input.

What’s it like to receive public preprint feedback?

The following authors described their experiences on Twitter.

Jacob Scott summarized manuscript changes prompted by feedback from Twitter.

A colleague who provided feedback to Dan Quintana became his coauthor on a revised version of the preprint.

Amanda Haage incorporated public feedback into her revised preprint about the faculty search process.

Michael Marty reacted to a journal-independent preprint review process coordinated by James Fraser.

For readers & reviewers

How can I provide constructive feedback?

Many of the same principles that guide constructive private review apply. In addition, we’ve drafted the FAST (Focused, Appropriate, Specific, Transparent) principles to help authors, reviewers, and other stakeholders contribute constructively to public preprint feedback. Briefly:

- Focused: on the science, not the individuals or journal to which the paper might be submitted. Respect the existing focus and scope of the paper.

- Appropriate: use a polite, respectful, and constructive tone. Reflect on your motivations and biases. Behave with integrity, following ethical conduct expected in all research activities. Call out inappropriate behaviors or critiques.

- Specific: consider whether claims are supported by the data and provide clear, actionable, and useful suggestions for the authors to improve the work. Distinguish between critical vs optional modifications.

- Transparent: post feedback publicly, sharing information on the limits of your expertise and competing interests. Acknowledge errors & credit colleagues who contributed to the review.

Where should I post this feedback?

Public preprint feedback can be posted in a variety of locations, including the commenting section of preprint servers with this functionality, social media networks, general repositories such as Zenodo and GitHub, and third-party reviewing platforms. Here are some factors to consider:

- Visibility. Using a preprint server’s commenting section is often the easiest way to make your review visible to those who view the preprint. However, not all preprint servers offer commenting functionality (see our directory of preprint servers for more details). Furthermore, some, such as bioRxiv, prominently link to tweets or third-party review sites. Check the server in question to evaluate these options.

- Permanence and citability. While anything can technically be cited, if your review has a persistent identifier (PID) such as a Digital Object Identifier (DOI) and relevant metadata, it will be easier for 1) others to cite your work, 2) you to include it in your ORCID profile, and 3) other services and search tools to index and display it. A commitment from the service to make their content permanently available is also implicit in the use of DOIs, though other venues that don’t offer DOIs may also have plans in place for long-term preservation.

- Limitations on participation. While commenting sections, social media, and many reviewing projects allow anyone to post, be aware that some reviewing projects aren’t open to all: they may function as journal clubs or use editors to identify reviewers.

Does it have to be signed?

No. While policies differ across preprint server comment sections and reviewing venues, it’s possible to post anonymously or under a pseudonym at many of them.

How can I gain credit or recognition for public preprint review?

The ASAPbio Preprint Reviewer Recruitment Network allows you to volunteer for consideration for the editorial boards and reviewer pools of dozens of journals, submitting preprint reviews and comments you’ve written as work samples.

Currently, formal systems for specifically recognizing this scholarly activity are few and far between. Nevertheless, you can also add preprint reviews you’ve written to your CV under a section for peer review and commentary, or list them on your ORCID profile. (When viewing your profile while logged into ORCID, you can select “Add works” to the right of the “Works” section, selecting “Other” for both work category and type.)

What should I do if I see a misleading or inappropriate review?

If you see a review that is disrespectful, ad hominem, or merely mistaken, please reply to the review to point this out. Bystanders have an important role to play in correcting inappropriate or misleading reviews, making the literature easy to understand for everyone.

Especially if you are associated with the authors in any way, it is prudent to declare any competing interests up front when posting or refuting comments.

For further discussions, please see the FAST principles for public preprint feedback.

How can public preprint feedback be used in education?

Preprint feedback can be a way to help students, such as undergraduates, learn about the scientific process by actively taking part in peer review. As described in the summary of a #FeedbackASAP breakout session organized by Mugdha Sathe (UW), Rebeccah Lijek (Mount Holyoke), and Daniela Saderi (PREreview):

“Involving ECRs in preprint review improves their scholarly skills of critical thinking and writing, and it also broadens students’ understanding of science to include community engagement. Since preprints and their peer reviews play a major role in new developments related to COVID-19, understanding of these processes among non-specialists has become even more crucial.

Preprint review is a meaningful component of the scientific process, and as such, Lijek is studying whether participating in it improves ECRs’ sense of belonging in science. She posits that preprint review provides an exciting new avenue to support the persistence of BIPOC, women, and gender minorities in STEM.”

Public preprint feedback can also come from journal clubs, both those organized informally within a lab and those that are part of graduate education. Two examples of these courses were discussed by James Fraser (UCSF) and Fabio Palmieri (University of Neuchâtel) in a session at #FeedbackASAP (view the slides).

Dyche Mullins has integrated public preprint review into a class for graduate students at UCSF. The project description contains information about implementation as well as a summary of the FAST principles to guide students toward a positive review culture.

For authors

Why would I want criticism in the open?

Inviting open feedback on your work can have several benefits.

First, open feedback can ultimately make work more robust. As science becomes increasingly interdisciplinary, many papers cannot be reviewed adequately by a single “expert.” Open feedback from a scientific community can be more robust than private comments shared by a small number of reviewers. Not only is the former strategy capable of engaging a larger group of researchers, it also benefits from interactions among them. One reader may ask a question answered by another, and reviewer comments can be independently verified or challenged by others.

Second, publicly rebutting criticisms allows authors to add context to critiques that may have damaging consequences when raised privately. For example, a journal editor may reject a paper or decide not to send it out for review on the basis of opinions authors only hear about after the decision has been made. Similarly, critiques of work in a study section or by a hiring or promotion committee are hidden from authors. Many of these critiques and concerns could also occur independently to anyone reading the paper. If authors have the opportunity to rebut criticisms in the open, decision makers could take those arguments into consideration. Thus, criticism on a preprint is an opportunity to weigh in on critiques that are likely to recur in the privacy of editorial triage processes, grant peer review, promotion committees, and the minds of colleagues more generally.

Finally, having publicly accessible reviews posted, especially alongside your responses, can facilitate the use of those reviews by journals, potentially reducing the need for re-review of your work.

Do I have to respond to all the feedback?

In general, it’s good practice to respond to all feedback. However, in rare cases, the line between constructive feedback and trolling may blur. As Hilda Bastian points out:

“Well-founded criticism, no matter how small the point it addresses, or how nastily packaged, is a gift. It comes with the power to sharpen our thinking, science, and communication skills.

But sometimes, it’s not well-founded, or it’s rehashing a point that has been made over and over (sometimes even hundreds of times!). And sometimes, it’s ill-informed and/or straight up trolling. That’s tough territory when you’re the target, or you see others being targeted and fear for yourself.”

For further discussions, please see the FAST principles for public preprint feedback.

What should I do if I receive misleading or harmful feedback?

In most cases, posting a courteous and rational reply will help to correct the record. In the rare cases where such a reply leads to additional harmful feedback, consider reporting to moderators, where available, or flagging the comment as inappropriate on Twitter or Disqus.

For further discussions, please see the FAST principles for public preprint feedback.

Can public preprint feedback hurt my career or my chances of publishing in a journal?

With public preprint feedback, criticisms that would otherwise only be raised privately (for example, in the minds of your readers, or in funder and journal peer review) are brought out into the open. This can alert readers to real or perceived issues they would not otherwise have been aware of.

On the other hand, if you publicly respond to the criticism, you gain an opportunity to address questions and worries that might have occurred to readers even in the absence of public feedback. This presents an opportunity to not only strengthen your paper, but also to preempt private criticism that could arise at a journal, grant review panel, or hiring committee.

How do I request public feedback?

By explicitly requesting public feedback on your preprint, you’ll help readers feel welcome to share their comments. Specifying the time frame and type of feedback you need can ensure the input you get is more likely to be constructive.

To request feedback, tweet out a request, or leave a comment on your own preprint inviting public review. Here’s a suggested format:

“My co-authors and I welcome public feedback on our preprint, ideally by [DATE]. We are especially interested in [statistics, etc].”

For journals, editors, and publishers

How can I use preprint feedback in my editorial process?

If you are an editor, feedback on a preprint (whether in the form of formal peer reviews, social media chatter, or comments on the server) can help provide context around a paper.

See “How can I find it?” for tips on locating this feedback. Searching for author responses is also critical.

Preprint reviews should be read critically with the knowledge that many venues are unmoderated and/or do not require the disclosure of competing interests.

As a journal, editor, or publisher, how can I encourage public preprint feedback?

There are several ways for journals, editors, and publishers to encourage public preprint feedback.

- Encourage editors to look for feedback and use it in their editorial process. You can also announce this policy in your instructions to authors, encouraging them to respond to public feedback where it appears.

- Post reviews on preprints. eLife now exclusively reviews preprints and posts public reviews on them. The SciELO journal Educação em Revista enacted a similar policy in February of 2021.

- Participate in the Preprint Reviewer Recruitment Network. This pilot seeks to use public preprint feedback as work samples, helping journals find new reviewers or editorial board members.

For funders and institutions

How can I encourage public preprint feedback?

As proposed by Bodo Stern (HHMI) at #FeedbackASAP, funders and institutions can endorse the inclusion of refereed preprints as peer reviewed articles in applications for funding and employment. Researchers could list their preprint along with the peer reviews it has received as a publication.

Funders and institutions can also encourage researchers to list preprint reviews they have written as scholarly outputs. These outputs could be cited with a tag that identifies them as reviews of other work, or they could be listed under a dedicated section of the publication list.

Enabling researchers to list their refereed preprints and preprint reviews will send a constructive signal about the value of these outputs. Institutions and funders can also educate and communicate with evaluators about the assessment of these outputs to ensure they are taken into consideration for funding, hiring, or promotion decisions.